Respeecher Interviewed in LinkedIn Course ‘Understanding the Impact of Deepfake Videos’

Deepfake videos are a subject of increasing interest and scrutiny worldwide. To that end, LinkedIn Learning has produced a course about synthetic media called “Understanding the Impact of Deepfake Videos” that aims to provide a comprehensive overview of deepfake technology (AI content generation).

Respeecher was invited to provide insight into deepfake audio: how it’s produced and what its current and future use cases are. Read on to find out which section of the course we’re featured in and watch our own Grant Reaber dive into the topic of AI voice generation.

Senior staff instructor Ashley Kennedy explains what deepfake videos and deepfake audio are, and delves into the impact of this technology, its dangers and benefits, how people can learn to identify synthetic media, and what to do when we suspect such content.

Ashley Kennedy is a Managing Staff Instructor at LinkedIn, leading the team of Staff Instructors in the Business and Creative Libraries at LinkedIn Learning. She is involved in course creation, instructional design, and course production, and she has also created her own courses on various topics (including video, filmmaking, storytelling, social media marketing, education, and more).

Ashley was kind enough to interview Respeecher’s Chief Research Officer Grant Reaber in the section called “What is Deepfake Audio”.

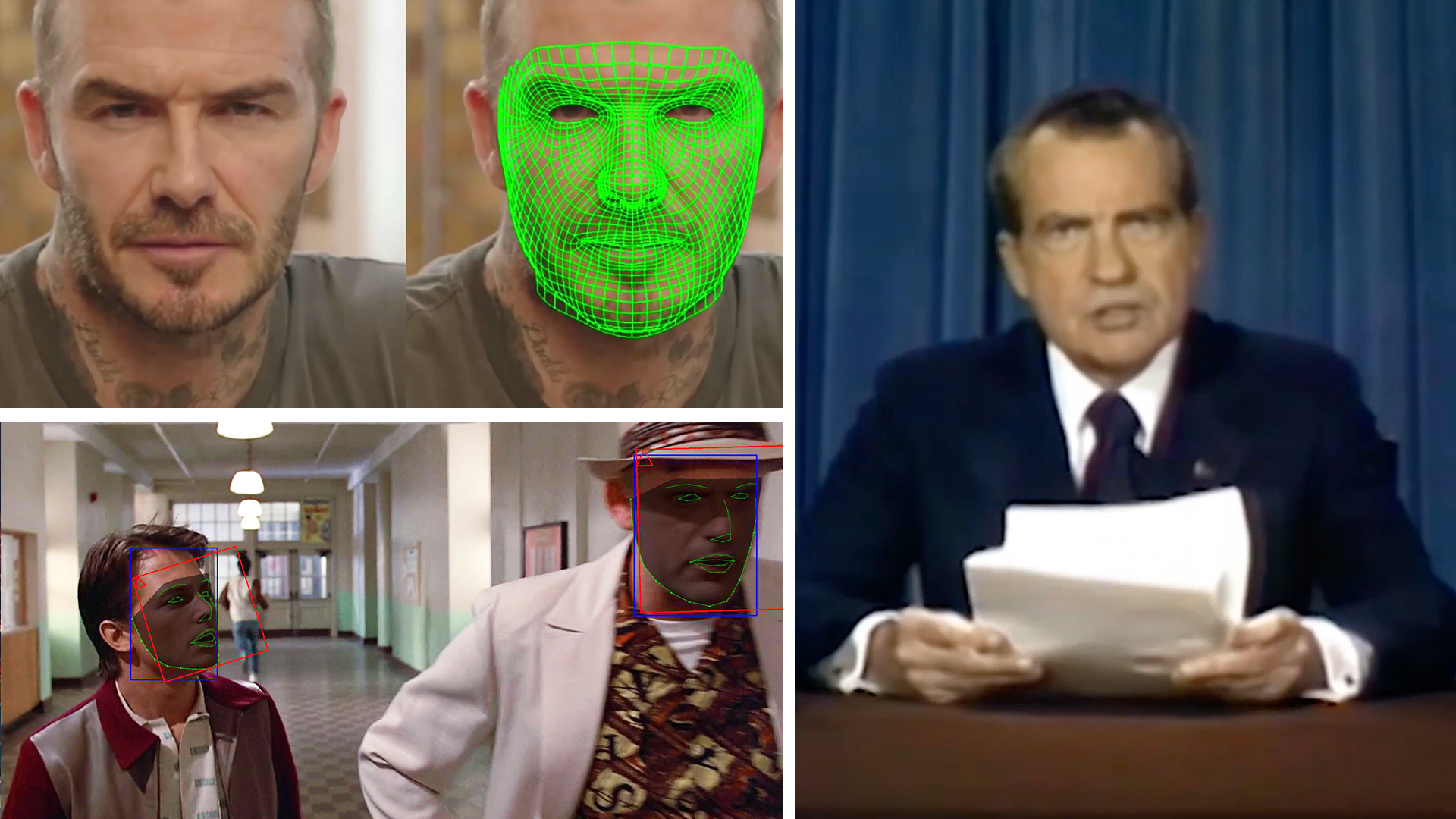

Generally speaking, synthetic media (also known as ‘deepfakes’) consists of manipulated media (video and/or audio) that uses AI to replace a person with someone else's likeness, making it appear as if they said or did something that never happened.

While some ethical problems regarding AI voice cloning are simple, others are more difficult. We don't simply trust our gut to tell us what we should do. Our decision-making is guided by a set of ethics principles, which you can read about on the Respeecher FAQ page.

Companies in the synthetic media landscape, like Respeecher, aim to revolutionize the way content is produced by bringing more flexibility to industries like entertainment, video games, advertising, and more. Even so, deepfakes can also be used unethically to mislead audiences. This is why part of our mission at Respeecher has always been to:

- Educate the public about the capabilities of synthetic speech technology.

- Develop automatic detection algorithms that can detect synthetic speech even if it has not been watermarked by us.

- Work with gatekeepers of content such as Facebook and YouTube to limit the harm of voice cloning by bad actors through prominent labeling of all synthetic content and banning of particularly unethical content.

So you can imagine our excitement when Ashley reached out to us.

The course “Understanding the Impact of Deepfake Videos” covers skills like compositing, video production, media psychology, and visual effects. So far, over 65,000 members have liked the content and over 180,000 have enrolled in the course. And these numbers keep growing.

A better understanding of voice synthesis and its benefits

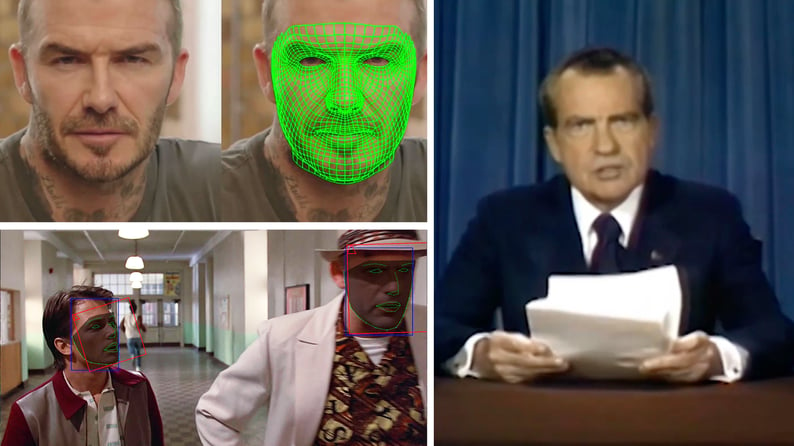

In the section called “What is Deepfake Audio”, from the first part of the course, you can watch our own Grant Reaber talk about voice synthesis, give a few examples of synthetic audio content, and use the voices of some famous politicians like Barack Obama and Richard Nixon.

With speech-to-speech (STS) voice conversion technology, the speaker's own speech patterns are preserved, but their voice is replaced with another. This gives creators more control over the emotions being expressed. STS delivers a natural performance, keeping the inflection and other characteristics of human speech.

Expressivity and this subtle control over the performance are one reason to use voice conversion. So, basically, an actor can express themselves just as much through voice conversion as they could if they were using their own voice. It's an additional creative tool that lets you change the voice of the performer.

Respeecher works with movie studios and video game developers, and helps content creators generate speech that's indistinguishable from the original speaker. In fact, the usage of AI voice cloning includes:

- making changes to dialogue in films;

- making voiceover updates for educational videos and other media;

- improving voice quality for individuals who require enhancements;

- reducing foreign accents in real time;

- providing a voice to people who have lost the ability to speak.

Respeecher will never use the voice of a private person or an actor without permission, but we can use, for example, the voices of historical figures and politicians. We also choose to watermark our audio.

Conclusion

Respeecher started from the idea of cloning human speech and swapping voices for the entertainment industry: filmmakers, TV producers, game developers, advertisers, podcasters, and content creators of all types.

The company was founded in 2018 by three friends and colleagues: Alex Serdiuk, Dmytro Bielievtsov, and Grant Reaber. In October 2019, we completed the Comcast NBCUniversal LIFT Labs Accelerator, and in March 2020 Respeecher received $1.5 million in funding.

We'd love to hear your voice. (We promise not to replicate it unless you give us permission.) If you want to learn more about our technology or see how we can partner up on a project, drop us a line.

FAQ

Deepfake technology uses AI to manipulate audio and video, creating synthetic media by replacing someone's likeness or voice to make it appear as if they said or did something they didn’t. Deepfake technology benefits include creative flexibility, especially in entertainment and advertising.

Deepfake technology in marketing enables AI-powered personalized marketing, allowing brands to create targeted, immersive ads. It can enhance customer engagement with realistic, AI-generated content and improve synthetic media in marketing by using famous voices or faces without needing their physical presence.

Ethical concerns around deepfake technology involve misuse for disinformation, fraud, or manipulation. These concerns include the creation of fake news, the impersonation of public figures, and the spread of misleading content, all of which underscore the need for ethical AI standards.

Respeecher adheres to ethical AI standards by requiring explicit permission to use voices for AI voice cloning applications. They also watermark their audio and develop automatic detection algorithms to prevent misuse, ensuring that their technology is used responsibly in entertainment and beyond.

AI voice cloning can benefit industries like entertainment, advertising, video games, and education. It enables content creators to clone voices for dialogue updates, voiceovers, and personalized marketing without needing the original voice actor.

Respeecher’s AI voice cloning uses speech-to-speech voice conversion technology (STS) to replace a person's voice while maintaining speech patterns, inflections, and emotions. It allows creators to generate voices indistinguishable from the original, facilitating more creative freedom in AI-driven content creation.

Respeecher and others in the synthetic media industry work on developing deepfake detection algorithms to identify manipulated content. Safeguards include watermarking audio files and collaborating with platforms like Facebook and YouTube to label synthetic media and prevent unethical deepfake applications.

Ethical deepfake applications include using the voices of historical figures or celebrities for educational content, films, and video games. For example, Respeecher helps bring historical voices back for documentaries and entertainment, and never uses a person's likeness or voice without permission.
