Digital Humans: A 2021 Artificial Intelligence (AI) Trend Explained

Here at Respeecher, we love talking about innovative tech like AI content generation, synthetic speech, or how to make Mark Hamill look 35 years younger. As it turns out, all of these topics are symptoms of the rise of synthetic media, a trend that has burst into the entertainment, business, and even education sectors. Let's talk about digital humans.

Digital humans definition: who and what are they?

Don't panic: let's set cyborgs and transhumanism aside. The concept of digital humans has a more mundane meaning. A digital human is most often understood as any digital avatar that lets its operator completely swap their own identity for a virtual one. That virtual identity can serve as a real person’s digital representation, or it can be a unique character with a scripted identity.

There are many reasons why a person might turn to a digital avatar. The simplest is the need for anonymity. More complex reasons include producing content such as films or TV shows.

Digital human identities can be used just as effectively in video games (look at how superbly Keanu Reeves was digitized in Cyberpunk 2077) and by VTubers for video blogging.

It's not just the entertainment industry that is turning to digital human and hologram technology. Businesses are actively using these technologies to create virtual consultants and improve their client experience at every stage, from prospects to paying customers.

A brief history of digital humans that look just like us

The concept of the “digital human” was brought to life by Hollywood. Some of the first convincing uses of the technology appeared in films like Avatar (2009) and Rise of the Planet of the Apes (2011). Advances in 3D modeling and animation made it possible to transform real actors into digital characters, which in both cases were not even human.

Later on, video game studios adopted the trend successfully. In Beyond: Two Souls (2013), developed by Quantic Dream, real actors (Willem Dafoe and Caroline Wolfson) were transformed into digital copies that became playable on console and PC.

Interestingly, when a character differs from the real actor, studios working on movies or video games have to synthesize both the actor's digital avatar and a new AI voice. On our website, you can find more information about synthesizing a unique voice for digital humans in movies and about applications of voice cloning for video games.

The digital human industry has made significant headway in recent years. This is largely due to the emergence of software that has allowed people to create digital identities without having access to studio equipment or a sizable budget.

How digital humans are made: an overview of the technology

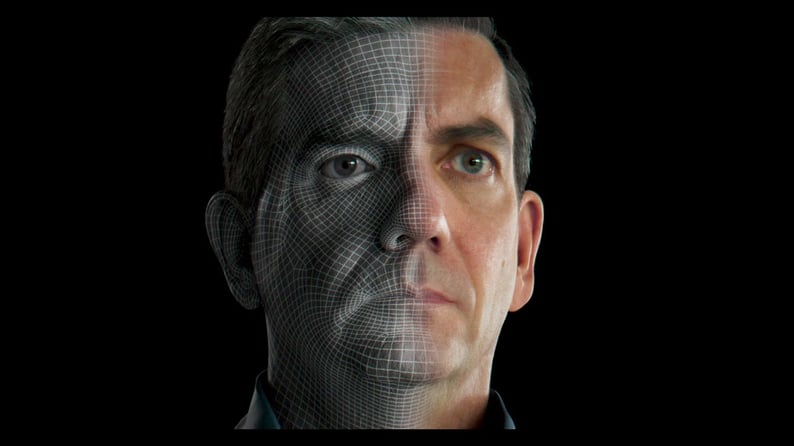

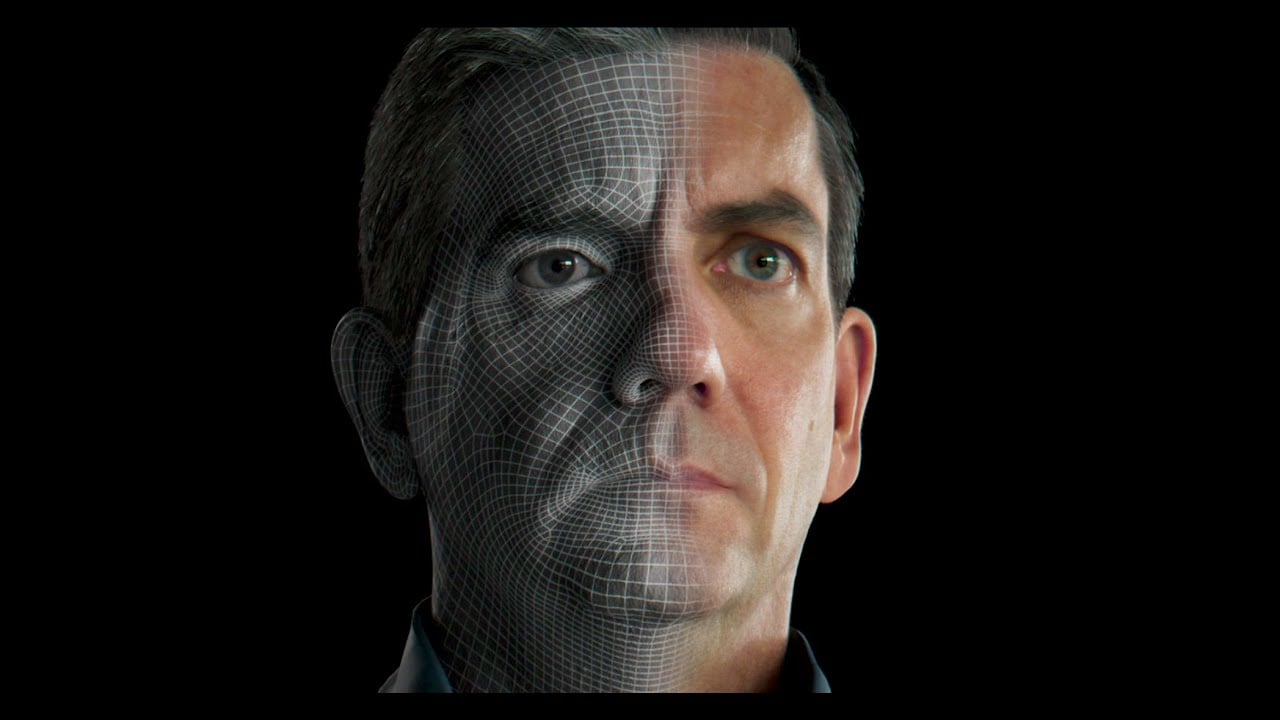

The process of creating a digital human identity has three critical ingredients: model creation, motion capture, and real-time graphics. If you’ve ever watched a digital human streaming in real time, you may be surprised to learn just how much is going on behind the scenes.

To build a 3D model of their face, the actor wears special markers. Several cameras film their face and body movements continuously, transmitting every frame and angle to the system responsible for rendering the digital character. Let's take a quick look at each step.

1. Model creation

Technically, there are two ways to create a brand-new virtual identity. For an animated cartoon personality, you start by drawing your new character from scratch. For a digital human identity, however, you need to start with a motion capture setup.

Typically, this is a highly controlled stage with multiple high-resolution cameras and controlled lighting. Software is also available that can achieve a similar result with just a mobile device or laptop, although a model made with a webcam or phone cannot compare to one created in a professional studio.

Once the digital model of the human's face and body has been built, it’s time to set it in motion.

2. Motion capture

For a virtual avatar, the model is 'driven' in real time by the actor's facial movements. Because the 3D avatar is not tied to any specific person, any actor can perform the character.
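A common way to drive a face rig is a linear blendshape model; whether any particular product works this way is an assumption here. A face tracker reports a weight between 0 and 1 for each expression, and the avatar's mesh is its neutral pose plus each expression's vertex offsets scaled by those weights. Because only the weights come from the actor, the same numbers can drive any avatar. The minimal Python sketch below uses hypothetical names (`apply_blendshapes`, `smile`, `jaw_open`) and a toy two-vertex mesh.

```python
from typing import Dict, List, Tuple

Vec3 = Tuple[float, float, float]


def apply_blendshapes(
    neutral: List[Vec3],
    deltas: Dict[str, List[Vec3]],
    weights: Dict[str, float],
) -> List[Vec3]:
    """Linear blendshape model: vertex = neutral + sum(weight_i * delta_i).

    `deltas` holds the avatar's per-expression vertex offsets (authored once
    per avatar); `weights` are the per-frame values reported by a face tracker.
    """
    out = [list(v) for v in neutral]
    for name, weight in weights.items():
        if name not in deltas:
            continue  # the tracker reported an expression this rig does not have
        w = max(0.0, min(1.0, weight))  # clamp noisy tracker output to [0, 1]
        for i, (dx, dy, dz) in enumerate(deltas[name]):
            out[i][0] += w * dx
            out[i][1] += w * dy
            out[i][2] += w * dz
    return [(x, y, z) for x, y, z in out]


# Toy avatar with two vertices (hypothetical data for illustration only).
neutral = [(0.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
deltas = {
    "smile": [(0.5, 0.0, 0.0), (0.0, 0.0, 0.0)],      # pulls vertex 0 sideways
    "jaw_open": [(0.0, 0.0, 0.0), (0.0, -1.0, 0.0)],  # drops vertex 1
}
# One frame of tracker output from whichever actor is performing.
frame_weights = {"smile": 1.0, "jaw_open": 0.5}
print(apply_blendshapes(neutral, deltas, frame_weights))
# → [(0.5, 0.0, 0.0), (0.0, 0.5, 0.0)]
```

Swapping in a different avatar means swapping `neutral` and `deltas`; the tracker's weights stay the same.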

The character always remains itself, no matter who the actor is. To capture an actor's body movements, you will need a special suit with motion sensors. A typical motion capture suit costs around $2,500 to $3,000.
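Assuming the suit's software reports each joint's rotation relative to its parent bone, applying those rotations to the avatar's own skeleton is a forward kinematics problem. The planar Python sketch below (hypothetical function and variable names, a two-bone arm instead of a full 3D skeleton) shows why the character keeps its own proportions: the actor supplies only the angles, while the bone lengths belong to the avatar.

```python
import math
from typing import List, Tuple


def forward_kinematics_2d(
    bone_lengths: List[float], joint_angles_deg: List[float]
) -> List[Tuple[float, float]]:
    """Return joint positions of a planar bone chain rooted at the origin.

    Each angle is the joint's rotation relative to its parent bone, so
    headings accumulate down the chain (shoulder -> elbow -> wrist).
    """
    x, y, heading = 0.0, 0.0, 0.0
    points = [(x, y)]
    for length, angle in zip(bone_lengths, joint_angles_deg):
        heading += math.radians(angle)
        x += length * math.cos(heading)
        y += length * math.sin(heading)
        points.append((x, y))
    return points


# One captured frame: shoulder raised 90 degrees, elbow bent back 90 degrees.
captured_angles = [90.0, -90.0]

actor_arm = forward_kinematics_2d([0.30, 0.25], captured_angles)
avatar_arm = forward_kinematics_2d([0.45, 0.40], captured_angles)  # longer-armed avatar
print(actor_arm[-1], avatar_arm[-1])
```

Both arms strike the same pose, but the avatar's wrist lands where the avatar's own bone lengths put it.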

3. Real-time rendering

The final ingredient is real-time graphics processing. Because the digital footage and realistic graphics have to be animated in real time, you’ll need a powerful computer and a special software engine that combines the actor’s motions with the avatar’s 3D model.

Usually, this can be done with the same engines game developers use for video games. The main difference is that in a video game, character motions are pre-scripted, whereas a digital human's motions come from a live performance and have to be rendered in real time.
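That difference can be sketched as a frame loop. At 60 frames per second, each frame has a budget of 1000 / 60 ≈ 16.7 ms in which to read the latest capture sample, apply it to the avatar, and render it; a game could instead look up the pose in a pre-recorded animation clip. This Python sketch is a simplified illustration, not how any particular engine works, and `read_pose` and `render` are hypothetical stand-ins for the capture system and the engine.

```python
import time
from typing import Callable, Dict


def frame_budget_ms(fps: float) -> float:
    """Time available per frame for reading, solving, and rendering."""
    return 1000.0 / fps


def run_realtime(
    frames: int,
    fps: float,
    read_pose: Callable[[], Dict[str, float]],
    render: Callable[[Dict[str, float]], None],
) -> int:
    """Drive the avatar from live input at a fixed frame rate.

    Returns the number of frames that overran their budget.
    """
    budget_s = 1.0 / fps
    late_frames = 0
    deadline = time.perf_counter()
    for _ in range(frames):
        deadline += budget_s
        pose = read_pose()  # latest live sample, not a pre-scripted clip
        render(pose)
        now = time.perf_counter()
        if now < deadline:
            time.sleep(deadline - now)  # wait for the next frame slot
        else:
            late_frames += 1
            deadline = now  # resynchronize instead of rushing to catch up
    return late_frames


print(f"{frame_budget_ms(60):.2f} ms per frame at 60 fps")
# → 16.67 ms per frame at 60 fps

# Stand-ins for a capture device and a render engine (hypothetical).
rendered = []
late = run_realtime(5, 60.0, lambda: {"jaw_open": 0.2}, rendered.append)
print(len(rendered), "frames rendered,", late, "late")
```

Resynchronizing after a late frame keeps the avatar in step with the live performer; a production engine would also have to deal with capture latency and interpolation, which this sketch ignores.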

To see all of this come together, we encourage you to watch this TEDx talk by Doug Roble, which shows a direct example of the technology in action.

Industry use cases: today and the future

We have already mentioned movies and video games. Technologies that synthesize digital people (not just their video, but also their voice) for entertainment purposes are now in greater demand than ever.

The growing VTuber trend, and synthetic film and TV media in general, suggest that universal media services are not far off. With products and agencies like Respeecher and Hololive Production, we may soon see a news broadcast featuring a single digital avatar speaking with the same voice in many different languages.

Imagine what opportunities this opens up for educational projects. A digital Thomas Jefferson could explain how the Declaration of Independence was written. A digital Neil Armstrong could share his impressions of his first step on the Moon at the exact moment it happens on screen. What was once a story in an old book can become an authentic interactive film or TV show.

Aside from cinema and game studios, who is adopting this technology fastest today? The answer may surprise you: traditional business.

Recently, sensational chatbots have given way to full-fledged digital video assistants. The combination of artificial intelligence, chatbots, and digital human avatars has made it possible to build a unique kind of service that is likely to change the customer service industry soon.

IBM recently released Watson Assistant, a service on IBM Cloud that helps anyone build and deploy virtual assistants. This AI-powered technology lets users program virtual assistants that go far beyond average chatbots.

Some companies, such as Kia, have already started using IBM's technology and digital humans in their car showrooms. Brands like ANZ, Sony, P&G, The Royal Bank of Scotland, and Mercedes-Benz have already implemented AI digital humans as part of their customer service.

Other industries likely to be disrupted by this technology over the next decade include:

- Banking and Finance
- Software and Technology
- Automotive
- Healthcare
- Energy
- Education

From teachers and sales representatives to customer support and news anchors, entire industries will be influenced by AI-powered digital human technology.

If you are interested in using a digital human in your business or entertainment project, keep in mind that you will also need a good speech synthesizer. Respeecher's AI voice generator technology already works with many studios, allowing them to give digital characters the voices of real people and to synthesize dubbing in multiple languages, which makes their content available to wider audiences.

FAQ

What are digital humans?

Digital humans are virtual avatars representing human identities. They can be used for virtual identity creation, synthetic media applications, and in entertainment or customer service, allowing complete transformation into digital personas for anonymity or content production.

How are digital humans created?

Digital humans are created through three key technologies: model creation, motion capture, and real-time rendering. Motion capture tracks an actor’s movements and applies them to a digital avatar, while real-time rendering creates the final realistic visuals.

How does motion capture technology work?

Motion capture technology uses specialized suits with sensors to track an actor’s movements, including facial expressions and body movements, which are then translated into a 3D digital model. The process often also relies on high-resolution cameras and controlled lighting for accuracy.

Which industries benefit from digital avatars?

Industries like entertainment, video games, customer service, and marketing benefit from digital avatars. These avatars are used in movies, by VTubers, as virtual assistants, and in advertising, enabling virtual interactions and improving customer experience.

How does Respeecher’s AI voice generator help digital humans?

Respeecher’s AI voice generator technology creates realistic voice synthesis for digital humans, allowing them to speak in multiple languages and use the voices of real individuals, which enhances virtual identities in entertainment and customer service applications.

What is real-time rendering?

Real-time rendering is the processing of 3D models and animations in real time, so that motion-captured performances are instantly applied to the digital human’s avatar. This technology is essential for live-streamed and interactive digital human experiences in entertainment and business.

How do VTubers use digital human technology?

VTubers use digital human technology to create virtual avatars that represent them in live-streamed content, enabling creators to maintain anonymity while interacting with audiences. These avatars are controlled through motion capture and real-time rendering to enhance live performances.

What are the ethical concerns with digital human technology?

Ethical concerns with digital human technology include identity manipulation, potential misuse for deepfakes, and consent when creating virtual avatars. There are also concerns about digital identities being used in marketing, entertainment, and customer service without transparency.

Glossary

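Digital humans

Virtual avatars representing human identities, used for anonymity, content production, entertainment, and customer service.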

Motion capture technology

A method for capturing actors' movements to create digital humans and virtual identities for synthetic media applications, real-time rendering, and entertainment.

AI voice generator

A tool that creates realistic voice models for digital humans, enabling virtual identity creation, synthetic media applications, and enhancing AI in customer service.

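Synthetic media

Media created or altered with AI, such as AI-generated content, synthetic speech, and digital humans.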

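Real-time rendering

The processing of 3D models and animations in real time, allowing motion-captured performances to be applied instantly to a digital human’s avatar.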
