Deepfakes in the Workplace: How Synthetic Media Improves Life at the Office

With deepfakes, things can get complicated. Just as with voice cloning, public attitudes tend more toward hostility than optimism.

We know AI voice cloning technology can be dangerous, which is why Respeecher follows a strict set of principles that guide its decision-making. You can read more about them on the Respeecher FAQ page.

At the same time, if the “fake” component can be minimized and the technology brought within a legal framework, deepfake generators can deliver a number of everyday benefits to the workplace.

With this in mind, let’s go through the negative and positive aspects of synthetic media for the future of work.

What are Deepfakes: Examples of Synthetic Media

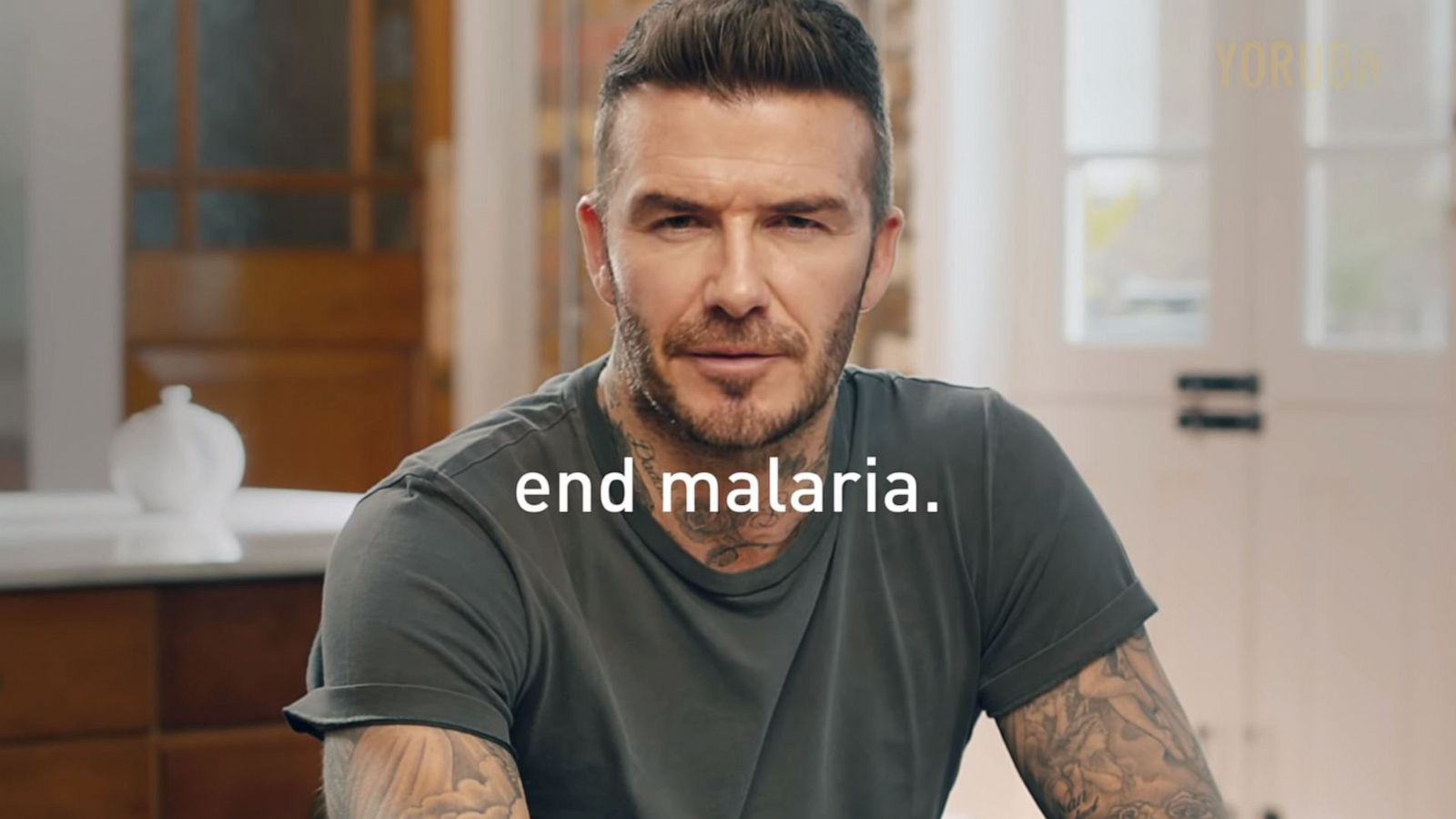

A deepfake is a video created using artificial intelligence, such as an AI deepfake generator. Put simply, a neural network assembles a video from existing images. For example, it studies thousands of photographs of David Beckham and produces a video in which he encourages people to fight against malaria.

Another example is when generative AI “mixes” one character with another and you get a combo: Jim Carrey as the villain from the movie “The Shining” or Brad Pitt as the Terminator.

In 2019, the Dali Museum in Florida “revived” Salvador Dali for his 115th birthday. The authors used deepfake technology which allowed "Dali" to interact with the museum’s visitors.

Deepfake technology often includes voice cloning, which allows a person's voice to be artificially recreated so that one speaker's performance sounds like the voice of another person, with their consent, of course. Ethical deepfake applications also have the potential to revolutionize the entertainment industry by providing new avenues for creative storytelling and character development.

This way, it is possible to improve or rejuvenate someone’s voice. With the help of our voice synthesis software, the NFL brought an American football legend, Vincent Lombardi, back to life on screen this year.

Another famous example of an ethical deepfake is the partnership between the DDB agency and Respeecher to revive Rivera Morales’ memorable voice with the help of an AI deepfake generator. The result was a beautiful performance in which the voice of Rivera Morales narrated the Puerto Rico match against China.

Voice cloning also allows for a voice to be de-aged, as we did with Mark Hamill’s (Luke Skywalker) voice in The Mandalorian.

The Dangers of Deepfakes

In the wrong hands, deepfakes can cause real harm. There have been several cases of deepfakes being used to discredit public figures.

For example, in the spring of 2019, a deepfake video of the Speaker of the US House of Representatives, Nancy Pelosi, was published online. With the help of AI, the author of the video altered Pelosi's speech so that she appeared to slur her words, and viewers thought that the politician was drunk.

The situation turned into a loud scandal, and only after a while was it proved that Pelosi's speech was generated by AI.

This is only one example of a deepfake being used to cause damage.

“Drunk” Nancy Pelosi, Trump against climate initiatives, and Obama calling Trump a complete dip**** demonstrate the potential this generative AI technology has to create chaos when in the wrong hands.

It is not easy for an ordinary user to distinguish an edited video from a real one.

Many people believe that spreading false information, invading privacy, and ruining someone’s reputation are only the beginning of what deepfakes may eventually lead to. Luckily, the picture is not that bleak. With the rise of deepfake maker tools, there is a growing awareness of the potential dangers associated with manipulated media and a concerted effort to develop safeguards against misuse.

To help people better understand the impact of deepfake technology, LinkedIn Learning has produced a course about synthetic media called “Understanding the Impact of Deepfake Videos”, aimed at providing a comprehensive overview of deepfakes.

Deepfake Technology Use Cases

AI voice cloning technologies themselves are neither good nor bad; it all depends on how they are used. The positive uses of the technology are too many to name, but some obvious ones include the ability to reanimate well-known actors who have grown old or passed away. Deepfakes may also serve as a safety net for actors when filming dangerous stunts.

Deepfake technology can also be used as a powerful interactive tool for promoting films with huge viral potential, where anyone can play the role of Superman or Batman.

There are many examples of deepfakes being used in advertising. As mentioned above, Beckham “starred” in a social video in which, thanks to an AI deepfake generator, he spoke nine different languages to inform more regions about the dangers of malaria.

The British company that shot the video prefers to call it “synthetic media” rather than "deepfake" to avoid negative connotations.

Users also leverage this generative AI technology to draw attention to social problems. The hacktivist Bill Posters creates deepfakes of public figures such as Zuckerberg, Kardashian, and Morgan Freeman and shares them on social media.

One of his most recent works is a teaser for a fictional TV project with Jeff Bezos, the head of Amazon. In this way, Posters tries to draw attention to the burning forests of the Amazon.

How to Detect Deepfakes

The accuracy and high quality of deepfakes have resulted in an increased distrust of video content by web users. However, on closer inspection, some of the videos show digital artifacts, or flaws. Many deepfakes contain flaws that tend to appear in the same areas of the face, which is why robust detection methods for deepfake maker technology focus on the cues below.

Eye color. Mismatched or mixed eye colors can signal a deepfake. The distance from the center of the eye to the edge of the iris should also be the same for both eyes, and both pupils should have cleanly rounded contours.

The boundaries. Inaccurate masking can result in the contours of the mask being sharply separated. This becomes clearly visible at the bottom of the face and above the eyebrows.

Glares and teeth. In many deepfakes, the usual glare in the eyes is absent, which gives the eyes a dull appearance. Another telltale sign is poorly rendered teeth, which can appear as separate white spots.

Light. One method for detecting deepfakes is to compare the light reflected in the corneas. In a photograph of a real person taken with a camera, the reflections in both eyes will be nearly the same. Images generated as deepfakes often fail to reproduce this similarity.
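The iris and corneal-reflection cues above can be sketched in a few lines of Python. This is a minimal illustration rather than a production detector. It assumes that some upstream step (not shown) has already located each eye and extracted the iris radius and the set of bright highlight pixels, with coordinates given relative to each eye's center. The function names, the data layout, and the thresholds (0.1 and 0.5) are hypothetical choices made for this example.

```python
from typing import Set, Tuple

Pixel = Tuple[int, int]  # (x, y) offset from the eye's center


def iris_radius_mismatch(left_radius: float, right_radius: float) -> float:
    """Relative difference between the two iris radii (0.0 means identical)."""
    larger = max(left_radius, right_radius)
    if larger <= 0:
        raise ValueError("iris radii must be positive")
    return abs(left_radius - right_radius) / larger


def highlight_iou(left: Set[Pixel], right: Set[Pixel]) -> float:
    """Intersection-over-union of the highlight pixels found in each eye."""
    union = left | right
    if not union:
        return 0.0  # no glare in either eye, which is itself a warning sign
    return len(left & right) / len(union)


def looks_suspicious(
    left_radius: float,
    right_radius: float,
    left_highlight: Set[Pixel],
    right_highlight: Set[Pixel],
    max_radius_mismatch: float = 0.1,  # assumed threshold, for illustration
    min_highlight_iou: float = 0.5,    # assumed threshold, for illustration
) -> bool:
    """Flag a face when the irises differ in size or the reflections disagree."""
    radii_differ = (
        iris_radius_mismatch(left_radius, right_radius) > max_radius_mismatch
    )
    reflections_differ = (
        highlight_iou(left_highlight, right_highlight) < min_highlight_iou
    )
    return radii_differ or reflections_differ


# Matching reflections and near-identical irises: not flagged.
glare = {(1, 1), (1, 2), (2, 1), (2, 2)}
print(looks_suspicious(12.0, 12.2, glare, glare))  # False

# Reflections in completely different places: flagged.
print(looks_suspicious(12.0, 12.1, {(1, 1), (1, 2)}, {(-3, 0), (-3, 1)}))  # True
```

A check like this can only raise a flag for human review. Real photographs taken with unusual lighting can fail it, and a well-made deepfake can pass it.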

How to Leverage Deepfake Technology in your Office

Have you ever imagined that instead of showing up at your next meeting in person or via Zoom, you could just send a realistic virtual simulation of yourself to your colleague or partner?

Well, for some companies this is a reality.

Partners at the British company EY (formerly Ernst & Young), one of the Big Four auditors, have started using deepfakes to communicate with clients in place of face-to-face meetings.

Deepfakes are used in customer presentations and emails. One of the partners, who does not speak Japanese, created a deepfake version of himself speaking to a client in Japan in fluent Japanese!

Important: Customers are always warned that they are looking at a video created by artificial intelligence, and not a real person, ensuring the ethical use of deepfake generators.

Deepfakes allow companies to:

- Reach clients in multiple languages

- Create training videos for employees and customers

- Promote themselves all around the world without travel and other expenses

Synthetic media also helps companies accelerate the process of creating images for e-commerce and marketing: to modify stock photos, create models to showcase new clothes, and much more.

In the past, brands had two choices: hiring a huge team of creatives or buying stock photos. Now portfolios can be created by an algorithm. This is especially helpful for small companies that do not have large marketing budgets.

In Conclusion

Amid the pandemic and ever-changing conditions, keeping up with all the innovations, outpacing your competitors, and developing your business is a 24/7 responsibility.

Deepfake technology based on voice cloning helps save time by “automating” and, at the same time, personalizing your customer relationship management.

Use technology wisely to get the most out of it for your business’s success.

FAQ

What are deepfakes, and how are they created?

Deepfakes are AI-generated media, often videos, created using a deepfake generator. Voice cloning and AI deepfake generators utilize neural networks to create realistic synthetic media by combining images, sounds, and data to mimic real people, enhancing entertainment or advertising applications.

How can synthetic media benefit businesses?

Synthetic media, like AI-powered media generation and deepfake videos, can help businesses create engaging, multilingual content without costly production. AI voice cloning also enables personalized, branded customer interactions and cost-effective advertising solutions, improving customer engagement globally.

What are the ethical concerns around deepfakes?

Ethical concerns include the potential for misinformation and manipulation. Deepfake technology can be misused to create harmful content, such as fake news. Responsible use is essential, with deepfake detection methods helping ensure that the technology remains ethical and transparent.

How does voice cloning enhance deepfake applications?

Voice cloning enhances deepfake applications by allowing the replication of real voices, enabling more immersive and authentic experiences. This can be used for ethical deepfakes in entertainment, such as bringing back iconic voices or creating realistic voiceovers for films and advertisements.

Which industries can benefit from deepfake applications?

Industries like advertising, entertainment, and education can benefit from deepfake applications. AI-powered synthetic media can create interactive deepfake applications, improve AI dubbing for movies, and facilitate AI-powered localization for global audiences.

How can deepfakes be detected?

Deepfakes can be detected using methods like analyzing eye color, lighting inconsistencies, and digital artifacts. Tools that identify flaws in facial features, like mismatched pupils or unnatural lighting, help identify AI-generated media and prevent misuse of synthetic media.

What are some examples of ethical deepfakes?

Examples of ethical deepfakes include the revival of Rivera Morales’ voice and Mark Hamill’s de-aged voice in The Mandalorian. These use AI voice cloning to revive famous figures for educational or entertainment purposes without causing harm.

How can businesses implement synthetic media ethically?

Businesses can implement synthetic media ethically by ensuring transparency, informing users when deepfake videos are being used, and avoiding misleading content. AI-powered localization and AI voice tools should prioritize honesty and authenticity in applications like marketing and customer engagement.

Glossary

Deepfake technology

Synthetic media

Voice cloning in AI

Ethical deepfakes

Deepfake detection

Generative AI tools

AI-powered voice cloning
